Overview of Our Research

Embodied AI

As artificial intelligence systems increasingly interact with the physical world, the field of Embodied AI focuses on developing agents that can perceive, reason, and act within dynamic environments.

Moving beyond static datasets, Embodied AI tackles the core challenge of integrating sensory understanding with physical action to accomplish complex tasks. Our research spans areas from robotic navigation to interactive task completion and centers on building intelligent systems that learn from and adapt to the real world through embodied experience.

Vision Language Action Models

The integration of vision, language, and action represents a frontier in creating generalist robotic agents. Vision-Language-Action (VLA) models face the challenge of grounding linguistic instructions and visual perception in precise, sequential physical actions. Our work in this area spans multimodal learning, robot control, and real-world deployment.

Vision Language Models

Vision-Language Models (VLMs) aim to bridge visual perception and natural language understanding, enabling machines to interpret, reason about, and communicate complex visual information. By jointly modeling images and text, VLMs move beyond isolated visual recognition or language processing and address the fundamental challenge of multimodal grounding and alignment. This paradigm supports a wide range of capabilities, from visual question answering and image captioning to multimodal reasoning and instruction following. Our research advances VLMs through scalable pretraining, robust cross-modal grounding, and efficient adaptation to downstream embodied applications, narrowing the gap between passive understanding and active engagement with the world.

Advanced Computing Architectures

As modern artificial intelligence systems continue to scale, traditional computing architectures are increasingly constrained by efficiency, latency, and energy consumption.

Emerging computing architectures seek to fundamentally rebalance the interplay among algorithms, hardware, and systems, enabling efficient and scalable execution of deep learning and large language models across cloud, edge, and resource-constrained platforms. Our research explores architecture–algorithm co-design paradigms that connect theoretical model advances with practical hardware realizations.

Deep Learning and LLM Accelerators

While deep learning and large language models have demonstrated remarkable success across a wide range of real-world applications, their rapidly growing computational and memory demands pose significant challenges to conventional hardware platforms. Most existing models are designed with limited awareness of hardware constraints, leading to inefficiencies in performance, energy consumption, and scalability. To address these challenges, we focus on the co-design of hardware-friendly model architectures and specialized accelerators, alongside system-level optimization techniques for efficient deployment. Our research spans accelerator microarchitecture, dataflow optimization, and software–hardware interfaces, aiming to enable high-performance and energy-efficient execution of deep learning and LLM workloads, particularly for edge and embedded environments.

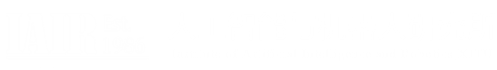

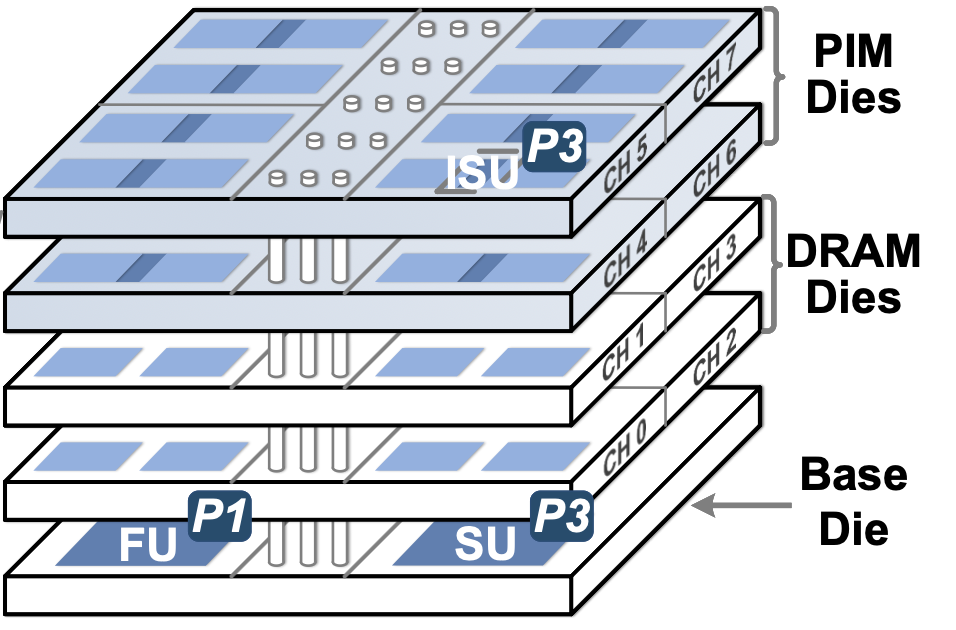

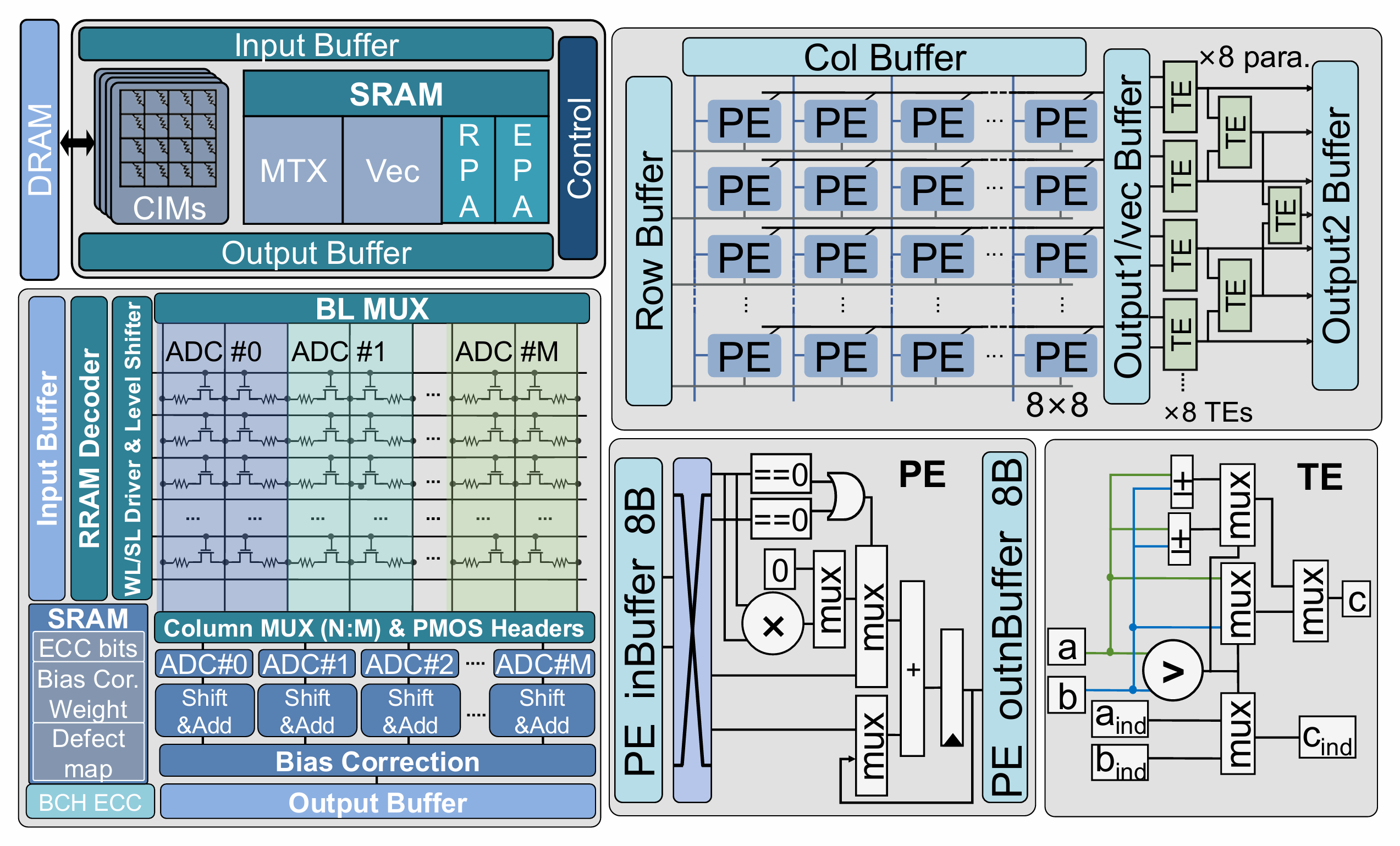

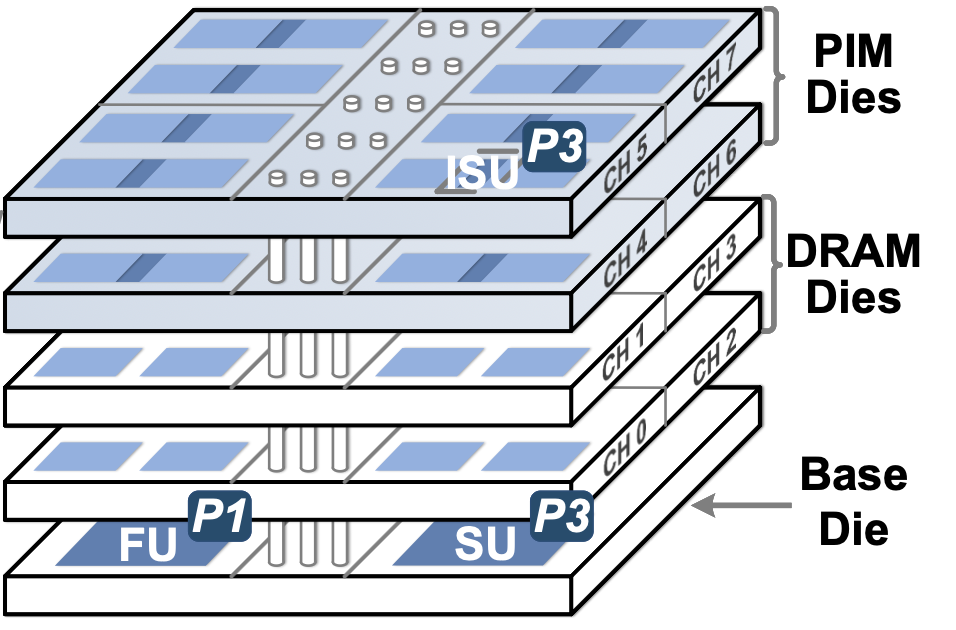

In-Memory and Near-Memory Computing

The increasing dominance of data movement costs has exposed fundamental limitations of the traditional von Neumann architecture, especially for data-intensive AI workloads. In-memory and near-memory computing paradigms seek to alleviate this bottleneck by tightly integrating computation with memory, reducing data transfer overhead and improving energy efficiency. Our research investigates compute-in-memory architectures, mixed-signal and digital designs, and algorithm–hardware co-optimization strategies, with a particular emphasis on neural network inference and learning. By exploring novel memory devices, architectural abstractions, and system-level integration, we aim to unlock new pathways toward scalable and efficient AI computing beyond conventional architectures.

Efficient AI

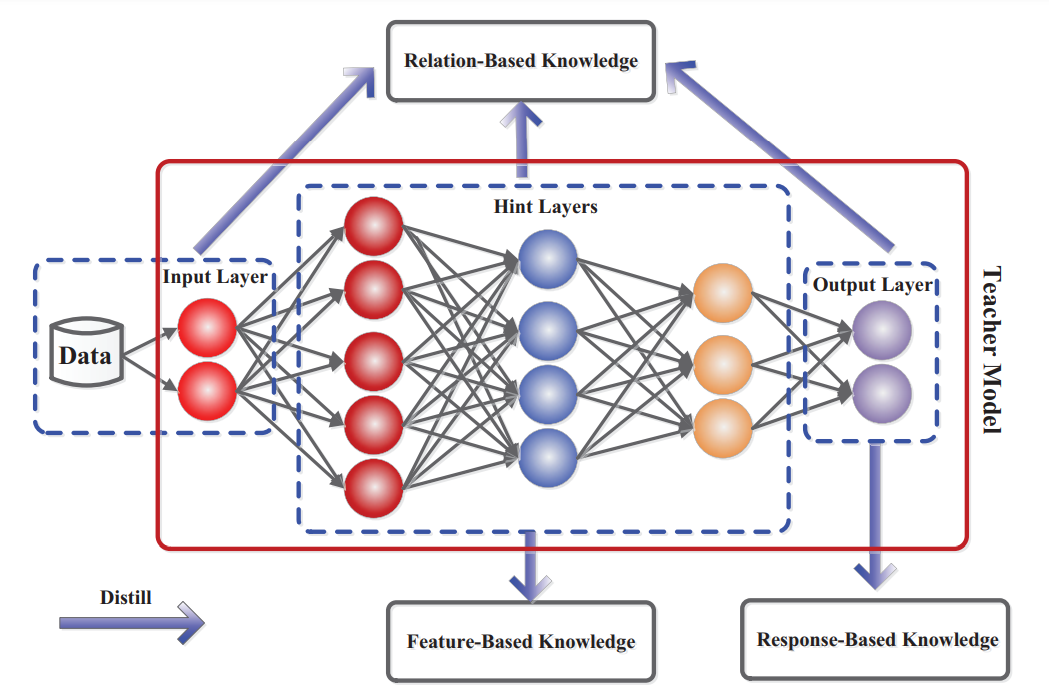

Efficient AI addresses the critical challenge of maximizing model performance while minimizing computational cost, energy consumption, and memory footprint.

As state-of-the-art AI models grow exponentially in size and resource requirements, deploying them in real-world, resource-constrained settings becomes increasingly difficult. Our work spans techniques from algorithm design to hardware-aware optimization, with the central mission of developing methodologies that make advanced AI capabilities accessible, sustainable, and scalable.